DLSS 4 Multi-Frame Generation and PlayStation PSSR

Three generated frames per real one and Sony's bespoke answer on the PS5 Pro.

This chapter tells two stories about neural frame reconstruction reaching the market in 2024–2025: NVIDIA's DLSS 4 Multi-Frame Generation (PC, RTX 50-series) and Sony's PSSR (PlayStation Spectral Super Resolution) on the PlayStation 5 Pro (custom RDNA-based console hardware).

They are very different approaches to a similar problem: a closed, high-end console SoC with a fixed silicon budget vs. an uncapped PC ecosystem with the world's biggest neural-accelerator GPUs. The trade-offs each chose are illuminating.

DLSS 4 Multi-Frame Generation

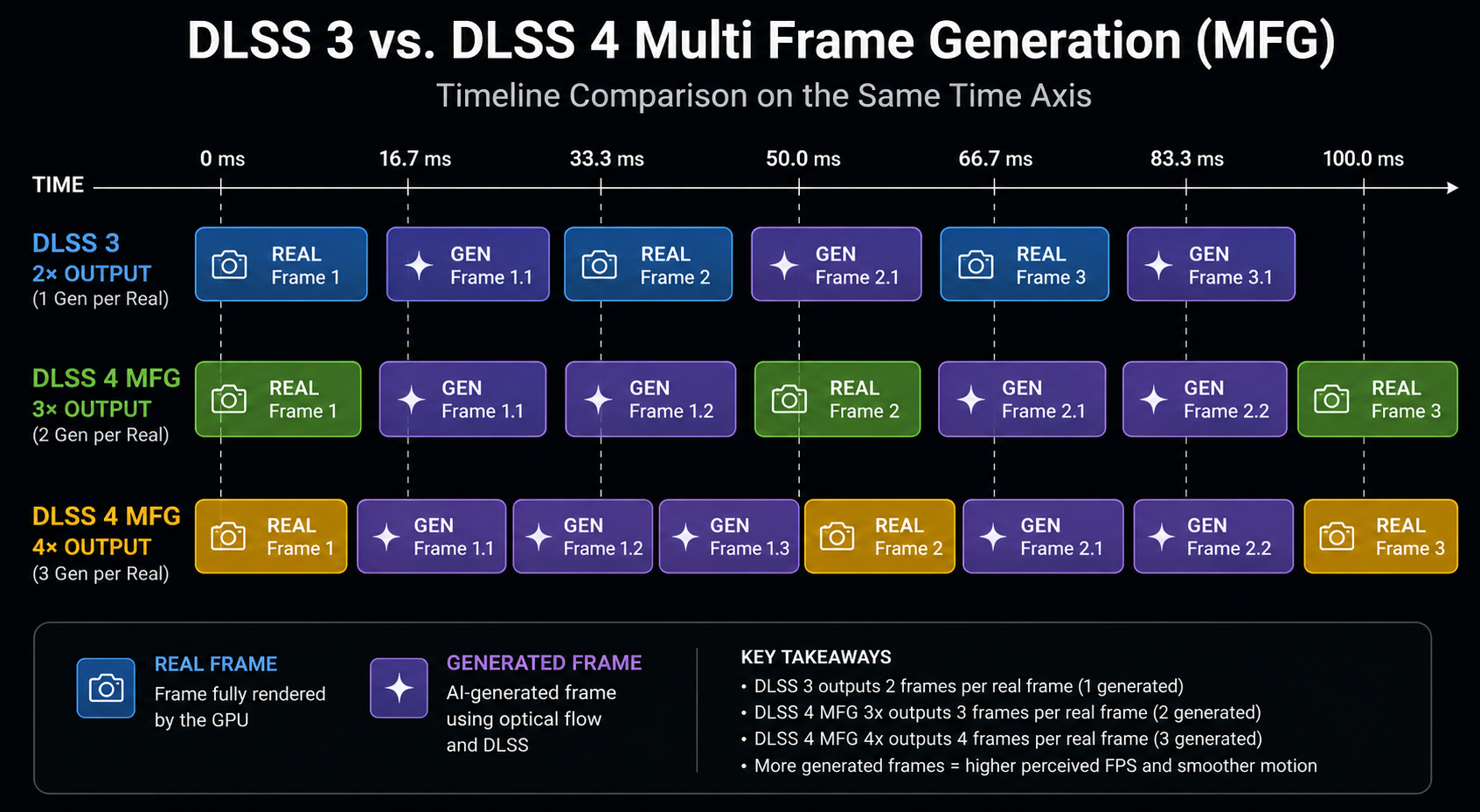

DLSS 3 inserts one generated frame between each pair of rendered frames, doubling the display rate. DLSS 4 extends this to as many as three generated frames between each pair of rendered ones, for up to 4× the display rate.

So at 60 rendered FPS, MFG can display at 240 FPS. At 80, it pushes 320. At 100, 400. The motivation is to feed the 480 Hz and 540 Hz OLED panels that are starting to ship; even the most powerful GPU cannot render 480 native frames per second of a modern game, but it can render 120 and have MFG paint the other 360.
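The arithmetic above is simple enough to write down. The following is a minimal sketch, with hypothetical helper names, that assumes every rendered frame is followed by the same number of generated frames and ignores any overhead that frame generation itself adds:

```python
def displayed_fps(rendered_fps: float, generated_per_rendered: int) -> float:
    """Display rate when each rendered frame is followed by N generated frames."""
    if generated_per_rendered < 0:
        raise ValueError("generated_per_rendered must be non-negative")
    return rendered_fps * (1 + generated_per_rendered)


def rendered_fps_needed(target_hz: float, generated_per_rendered: int) -> float:
    """Rendered rate required to saturate a panel refreshing at target_hz."""
    if generated_per_rendered < 0:
        raise ValueError("generated_per_rendered must be non-negative")
    return target_hz / (1 + generated_per_rendered)


if __name__ == "__main__":
    # The three cases from the text, all at 4x (three generated frames).
    for fps in (60, 80, 100):
        print(f"{fps} rendered -> {displayed_fps(fps, 3):.0f} displayed")
    # A 480 Hz panel at 4x needs 120 rendered frames per second.
    print(rendered_fps_needed(480, 3))
```

With three generated frames per rendered frame, three quarters of what the panel shows is synthesized, which is why the quality of the interpolation matters so much.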

Why this is harder than 2× FG

The interpolation problem gets significantly worse as you push more generated frames between real ones:

- The temporal slices get finer. At 60→240, the rendered frames are still 1/60 s apart, but each generated frame sits only 1/240 s from its neighbours. The interpolation network has to place motion at the 1/4, 1/2, and 3/4 points of the interval, and small errors in motion estimation produce visible judder.

- Errors compound. Three consecutive generated frames mean that an error in the motion field affects three frames in a row, which makes it easier to perceive as a sustained artifact.

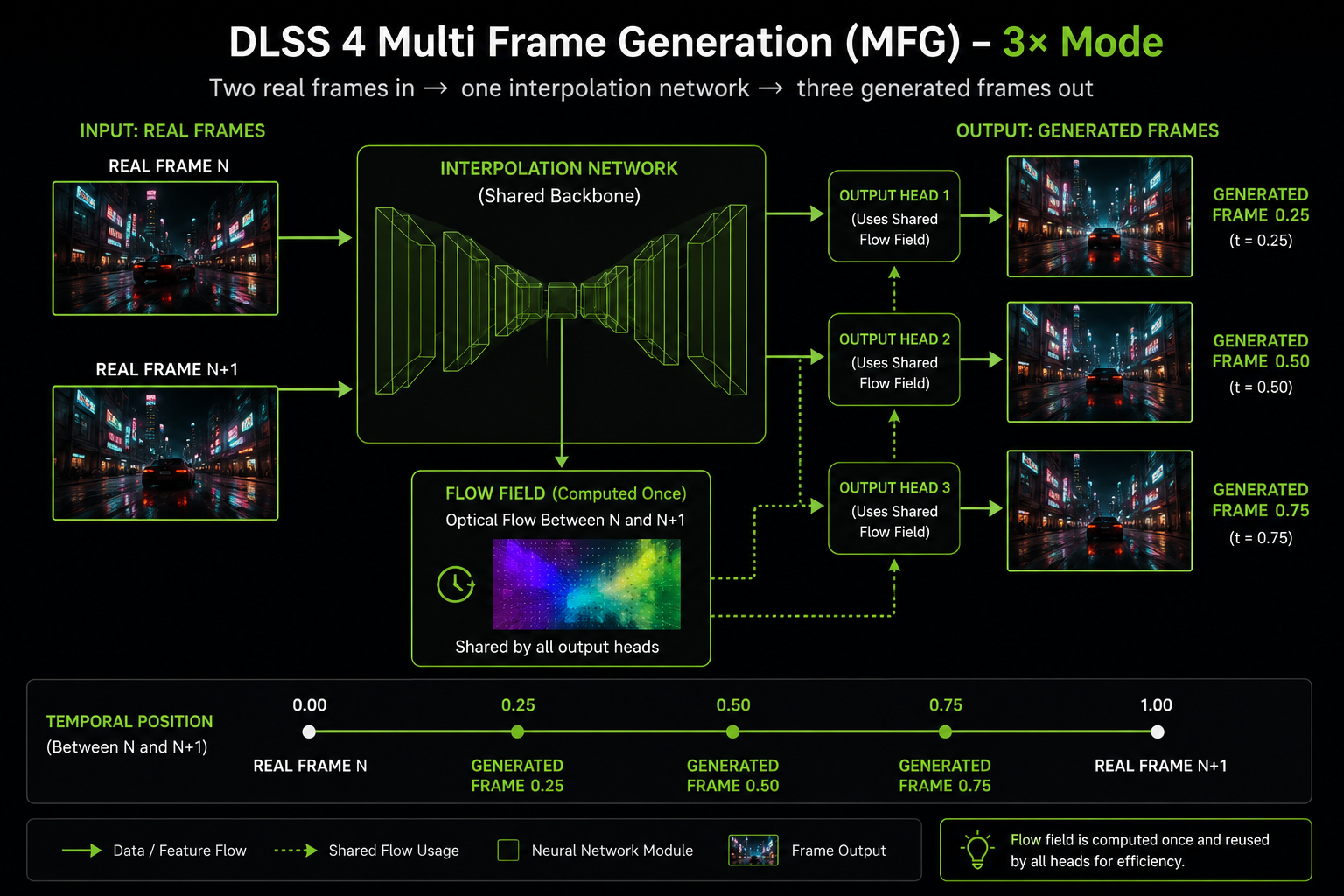

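- The optical-flow estimate is reused. Computing optical flow separately for every generated frame would blow the time budget, so the network has to share one flow field across all three generated frames, each weighted to its own temporal position. Any flow error becomes visible three times. (NVIDIA has said that DLSS 4 estimates this flow with an AI model rather than with the hardware Optical Flow Accelerator that DLSS 3 relied on.)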
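To see why a shared flow field repeats its errors, consider a deliberately simplified model. It is an illustration only, not NVIDIA's network: it assumes motion is linear across the rendered interval, so the displacement at temporal position t is just t times the full-interval flow. The function names are hypothetical.

```python
def temporal_positions(generated: int) -> list[float]:
    """Fractional positions of the generated frames (0 = previous rendered, 1 = next)."""
    return [k / (generated + 1) for k in range(1, generated + 1)]


def displacements(flow_px: float, generated: int) -> list[float]:
    """Scale one shared flow estimate (pixels per rendered interval) to each target time."""
    return [t * flow_px for t in temporal_positions(generated)]


def per_frame_error(true_flow_px: float, estimated_flow_px: float, generated: int) -> list[float]:
    """Pixel error each generated frame inherits from a single bad flow estimate."""
    truth = displacements(true_flow_px, generated)
    guess = displacements(estimated_flow_px, generated)
    return [abs(g - t) for g, t in zip(guess, truth)]


if __name__ == "__main__":
    print(temporal_positions(3))           # [0.25, 0.5, 0.75]
    # An object really moves 16 px, but the flow estimate says 20 px.
    print(per_frame_error(16.0, 20.0, 3))  # [1.0, 2.0, 3.0]
```

In this toy model a single 4-pixel flow error shows up in all three generated frames, growing with the distance from the previous rendered frame. A real interpolator also blends from the next rendered frame, which changes the error profile but not the fact that one mistake is seen three times.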

DLSS 4's solution is a redesigned interpolation network (a vision transformer, not the older CNN) that takes the flow field once, predicts displacement fields for three target time positions, and produces all three frames in a single fused inference pass. The cost is roughly 1.5× that of single-frame FG, while producing 3× the generated frames.
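The amortization in that last sentence is easier to see per generated frame. The sketch below uses only the relative figures quoted above (1.0 for single-frame FG, roughly 1.5 for the fused three-frame pass); the function name is hypothetical and no absolute timings are implied.

```python
def relative_cost_per_generated_frame(total_relative_cost: float, generated: int) -> float:
    """Inference cost attributed to each generated frame, in units of one single-frame FG pass."""
    if generated <= 0:
        raise ValueError("generated must be positive")
    return total_relative_cost / generated


if __name__ == "__main__":
    single = relative_cost_per_generated_frame(1.0, 1)  # DLSS 3-style 2x
    fused = relative_cost_per_generated_frame(1.5, 3)   # DLSS 4-style 4x
    print(single, fused)  # 1.0 0.5
```

On these figures, each generated frame in the fused pass costs about half of what a generated frame costs in the single-frame scheme.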

The hardware gate

MFG launched gated to RTX 50-series (Blackwell) only. NVIDIA's stated reason: the new "AI Management Processor" on Blackwell handles the scheduling of multiple interleaved inference passes more efficiently than Ada's pipeline. Independent analysis suggests the actual model would run on Ada, but with worse frame pacing and higher overhead per generated frame. As with DLSS 3 frame generation, which was limited to Ada (RTX 40-series), this gate is partly technical and partly product segmentation.

PlayStation PSSR: a different problem, a different solution

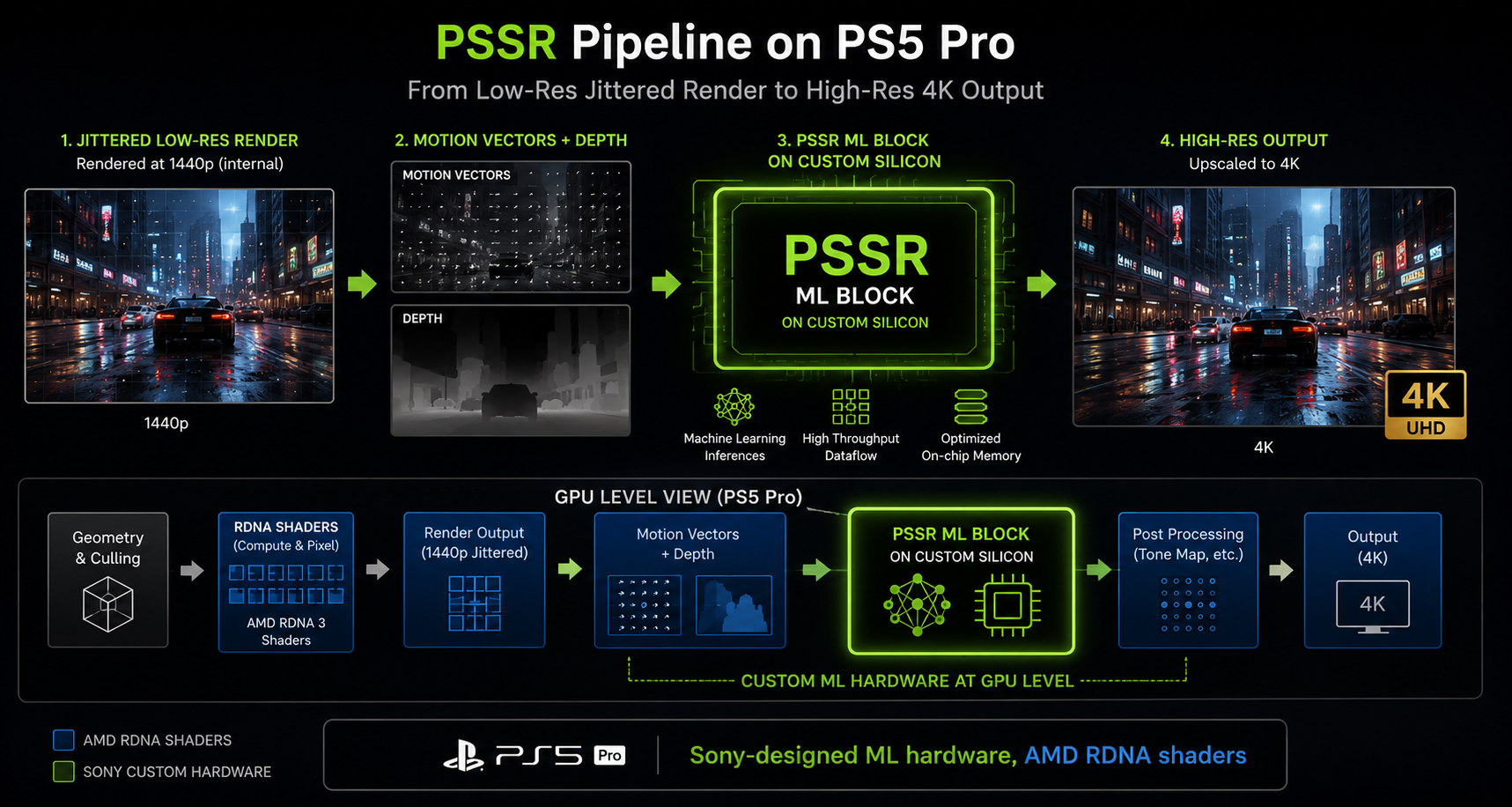

Sony's PSSR ships exclusively on the PlayStation 5 Pro (November 2024). It is a temporal AI super-resolution technique very much in the DLSS family: the same algorithm class, but trained, optimised, and deployed on custom AMD silicon that Sony co-designed.

The PS5 Pro's GPU is roughly an RDNA 3 / RDNA 3.5 hybrid with custom hardware blocks for ML acceleration that AMD's off-the-shelf RDNA does not have. Specifically: a dedicated set of matrix-math units (Sony calls it "machine-learning hardware") that can run PSSR's neural network at a low millisecond cost. Without that custom silicon, an open RDNA 3 GPU would have to fall back to general shader cores for the same math, and the cost would be prohibitive.

What PSSR is

In feature terms, PSSR is closest to DLSS Super Resolution 2.x: a temporal upscaler that takes a low-resolution rendered frame plus depth, motion vectors, and a history buffer, and produces a high-resolution output. It does not currently do frame generation. Sony has said multi-frame and frame generation variants are on the roadmap, but PSSR as shipped is a Super Resolution-class technique, not a DLSS 3-class one.
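To make the role of motion vectors and the history buffer concrete, here is a minimal hand-tuned sketch of temporal accumulation on a one-dimensional row of pixels. It is not PSSR's algorithm or API: a neural upscaler learns how to blend and reject history rather than using a fixed weight, and this toy omits upscaling, jitter, and depth entirely. All names, the integer motion vectors, and the `alpha` blend weight are assumptions made for illustration.

```python
def reproject(history: list[float], motion_px: list[int]) -> list[float]:
    """For each pixel i, fetch the history value from where its content was last frame.

    motion_px[i] is how far (in whole pixels, positive = right) the content now at
    pixel i has moved since the previous frame. Lookups are clamped to the row edges.
    """
    n = len(history)
    return [history[min(max(i - m, 0), n - 1)] for i, m in enumerate(motion_px)]


def accumulate(current: list[float], history: list[float],
               motion_px: list[int], alpha: float = 0.1) -> list[float]:
    """Blend the new sample with the reprojected history (exponential moving average)."""
    warped = reproject(history, motion_px)
    return [alpha * c + (1.0 - alpha) * h for c, h in zip(current, warped)]


if __name__ == "__main__":
    history = [0.0, 10.0, 20.0, 30.0]
    motion = [1, 1, 1, 1]              # the whole row shifted one pixel to the right
    print(reproject(history, motion))  # [0.0, 0.0, 10.0, 20.0]
```

When the motion vectors are wrong or missing, as they are for transparent surfaces, the reprojected history no longer matches the current frame, and the blend produces ghosting.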

A few specific design choices Sony made:

- Internal scale ratios are similar to DLSS Performance/Quality: render at roughly 1080p–1440p, output at 4K.

- The network is smaller than DLSS Super Resolution's, partly because the ML hardware on the PS5 Pro is much smaller than a 4090's Tensor Cores, and partly because consoles have a strict, predictable frame budget. Sony tuned for ~2 ms inference at 4K output.

- It is trained on PlayStation games specifically, not generically. Sony curates the training set from internal first-party titles and partner-supplied data. This means PSSR's prior is biased toward the visual style of PS games, which is generally helpful, but it also means new visual effects (e.g. unusual particle types) may need re-training.

- Engine integration is mandated by the PS5 Pro SDK: a game opting into "PS5 Pro Enhanced" must wire up jittered projection, motion vectors, and a depth buffer to PSSR's API. This is conceptually identical to DLSS integration but on PlayStation's APIs.
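Three of the points above lend themselves to a quick numerical sketch: the render resolution implied by a scale ratio, the share of a frame that a ~2 ms inference pass consumes, and the sub-pixel jitter that the engine applies to its projection. The helper names are hypothetical, and the Halton (2, 3) jitter sequence is an assumption: it is a common choice for temporal upscalers, but the text does not say which sequence PSSR expects.

```python
def render_resolution(out_w: int, out_h: int, axis_scale: float) -> tuple[int, int]:
    """Internal render size for a given per-axis scale (0.5 halves each dimension)."""
    return round(out_w * axis_scale), round(out_h * axis_scale)


def budget_share(cost_ms: float, target_fps: float) -> float:
    """Fraction of one frame's time budget consumed by a fixed-cost pass."""
    return cost_ms / (1000.0 / target_fps)


def halton(index: int, base: int) -> float:
    """Radical-inverse (Halton) value in [0, 1) for a 1-based index."""
    result, fraction = 0.0, 1.0
    while index > 0:
        fraction /= base
        result += fraction * (index % base)
        index //= base
    return result


def jitter_offsets(count: int) -> list[tuple[float, float]]:
    """Sub-pixel jitter offsets in pixels, each component in [-0.5, 0.5)."""
    return [(halton(i, 2) - 0.5, halton(i, 3) - 0.5) for i in range(1, count + 1)]


if __name__ == "__main__":
    print(render_resolution(3840, 2160, 0.5))    # (1920, 1080)
    print(render_resolution(3840, 2160, 2 / 3))  # (2560, 1440)
    print(f"{budget_share(2.0, 60):.0%} of a 60 FPS frame")
    for dx, dy in jitter_offsets(4):
        print(f"{dx:+.3f}, {dy:+.3f}")
```

A 2 ms pass is about 12% of a 16.7 ms frame at 60 FPS and about 24% of an 8.3 ms frame at 120 FPS, which helps explain why a console upscaler is tuned so tightly. The jitter offsets are added to the projection each frame so that successive low-resolution frames sample different sub-pixel positions, giving the upscaler new information to accumulate.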

Why Sony built its own instead of using FSR

This is the core question. AMD's FSR 2/3 was already shipping cross-vendor and would have been a no-cost option for Sony.

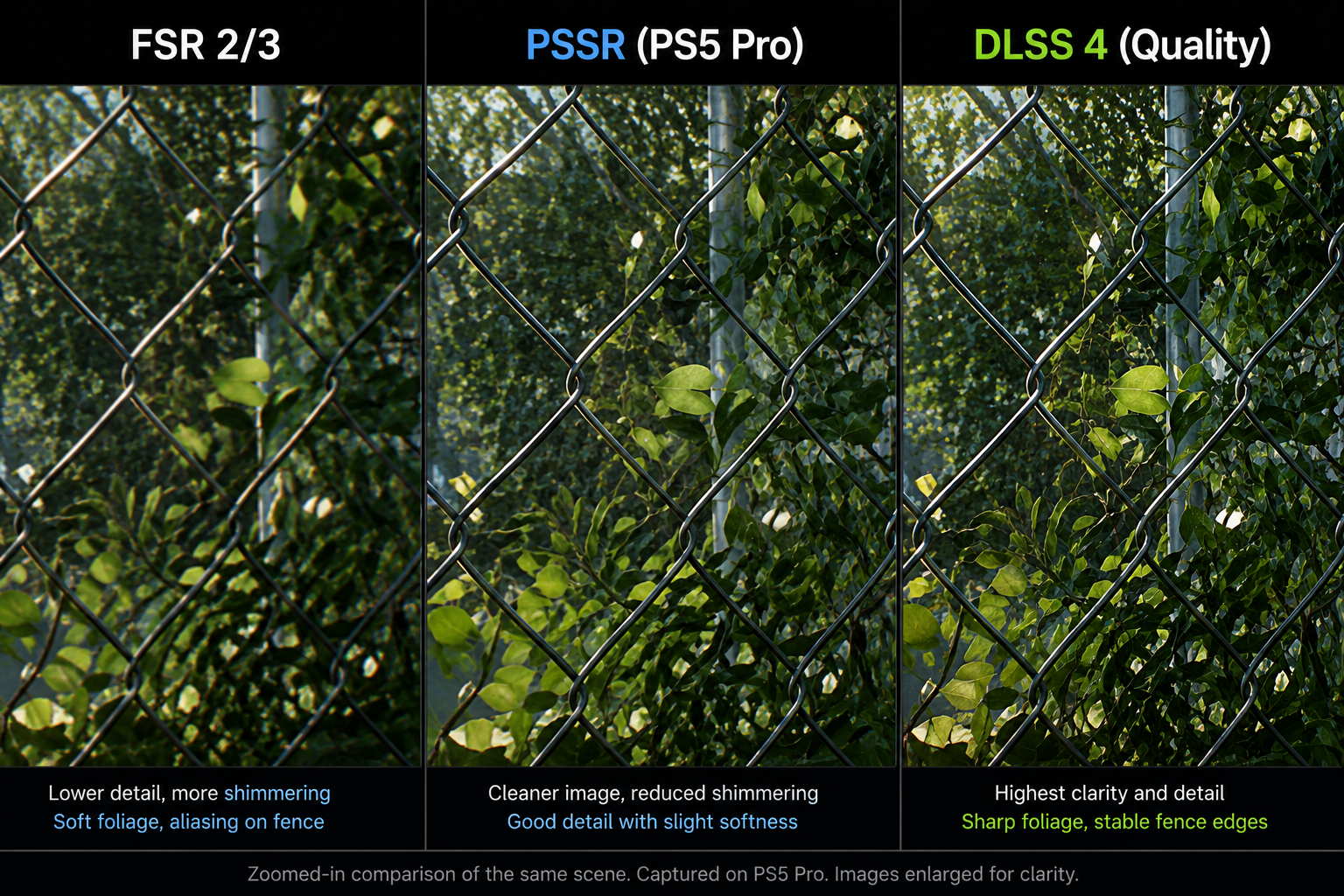

The answer is image quality. FSR 2/3 is not a neural-network upscaler; it is a hand-tuned temporal upscaler that runs on general-purpose shader cores. It is good, but it is consistently outperformed by DLSS in independent comparisons. Sony was building a premium-tier console specifically marketed on improved image quality, so they needed an upscaler in the quality class of DLSS, which meant accepting that:

- The hardware would need ML accelerators (FSR didn't need any). So they added custom silicon.

- They would need to train a neural network. So they built a training infrastructure and dataset.

- They would need per-game integration. So they made it part of the "PS5 Pro Enhanced" certification.

The result is the first non-PC platform with a genuine neural-network temporal upscaler. It is not as polished as DLSS 4 yet: direct comparisons in titles available on both platforms (e.g. Alan Wake 2, Star Wars Outlaws) show PSSR is closer to DLSS 2 than to DLSS 4 in image quality. But Sony will iterate, just as NVIDIA has across DLSS 1, 2, 3, and 4.

A note on PSSR's artifacts

Early PSSR implementations have shown:

- Particle shimmer: rain, snow, and small particles can flicker in motion. This looks like a training-distribution issue and should improve as the training set grows.

- Ghosting on transparent surfaces: glass, water. Same root cause as on DLSS (motion vectors don't describe transparency properly), but PSSR's smaller network has less capacity to compensate.

- Lower-frequency detail in foliage: PSSR is sharper than FSR but softer than DLSS 4 on small, repetitive features.

These are the same artifact classes DLSS 2 had at launch, and they are likely to follow a similar improvement curve.

What the comparison teaches

Across the platforms in 2026, real-time reconstruction looks roughly like this:

| Technique | Vendor | Class | Frame generation | Hardware required |
|---|---|---|---|---|
| DLSS 4 SR + MFG | NVIDIA | Neural (transformer) | Up to 4× | RTX 50-series for MFG, RTX 20-series or newer for SR |
| DLSS 3 | NVIDIA | Neural (CNN/transformer) | 2× | RTX 40-series for FG |
| FSR 4 | AMD | Neural | 2× | RDNA 4 or newer (cross-vendor possible) |
| FSR 3.1 | AMD | Hand-tuned + FG | 2× | Any modern GPU |
| FSR 2 | AMD | Hand-tuned | No | Any modern GPU |
| XeSS | Intel | Neural (CNN) | 2× (from XeSS 2) | Intel Arc (full quality) / any GPU (DP4a fallback) |
| PSSR | Sony | Neural | No (yet) | PS5 Pro only |

Two patterns are clear. First, the industry is converging on neural temporal upscalers; only AMD's older FSR generations remain hand-tuned. Second, frame generation is now table stakes on PC but is still a roadmap item on consoles.

In the next chapter we'll look more deeply at FSR and XeSS, the cross-vendor alternatives, because their design constraints reveal what is hard and what is easy about doing this without proprietary silicon.