DLAA: DLSS Without Upscaling

The same network, fed native-res input. The best anti-aliasing in real-time graphics.

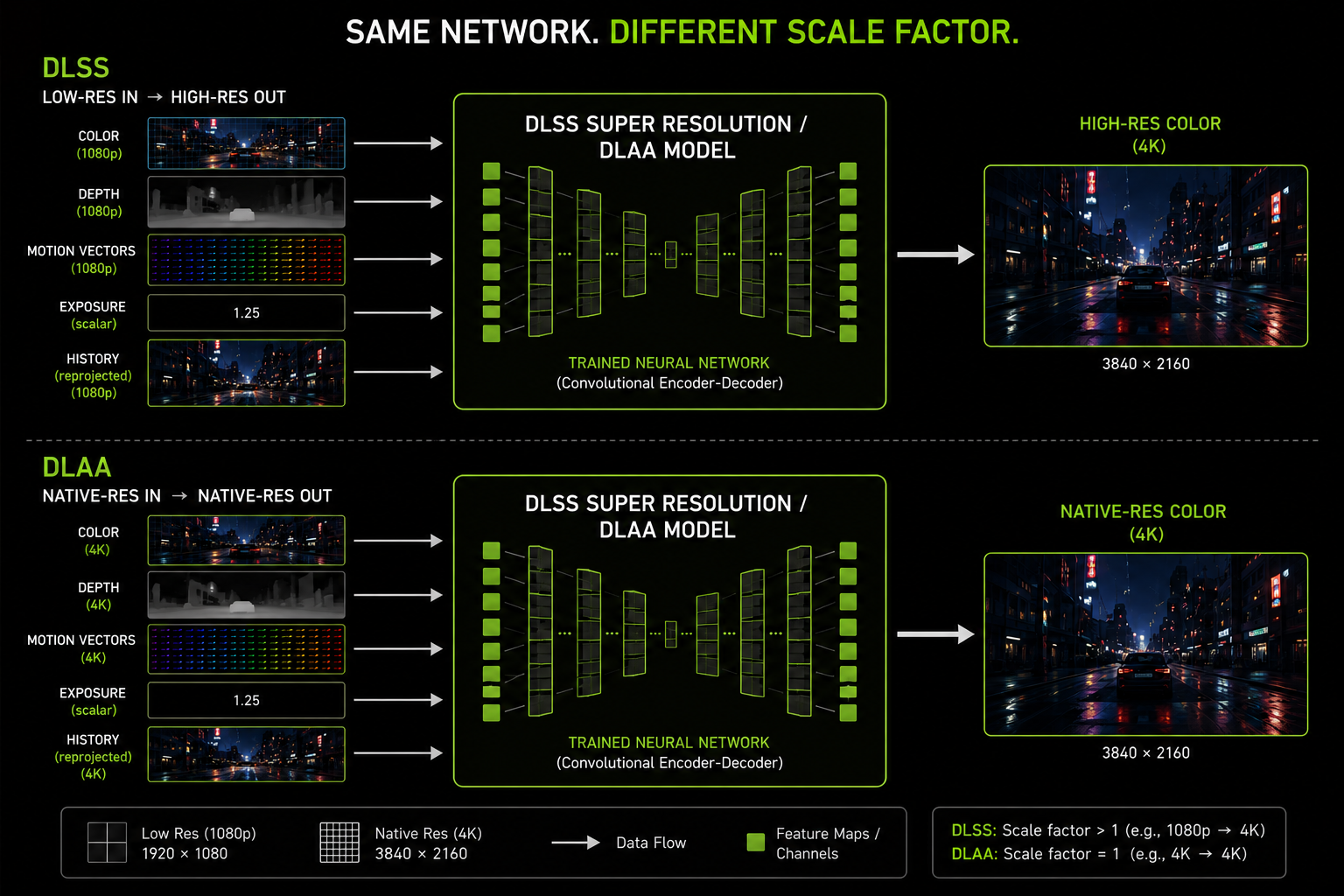

DLAA (Deep Learning Anti-Aliasing) sounds like a separate product. It is not. DLAA is DLSS Super Resolution with the input resolution equal to the output resolution. Same network weights, same code path, same Tensor Core kernel. The only difference is that the scale factor is 1.0.

So why does it exist as a separate name? Because the use case is genuinely different.

What DLAA is for

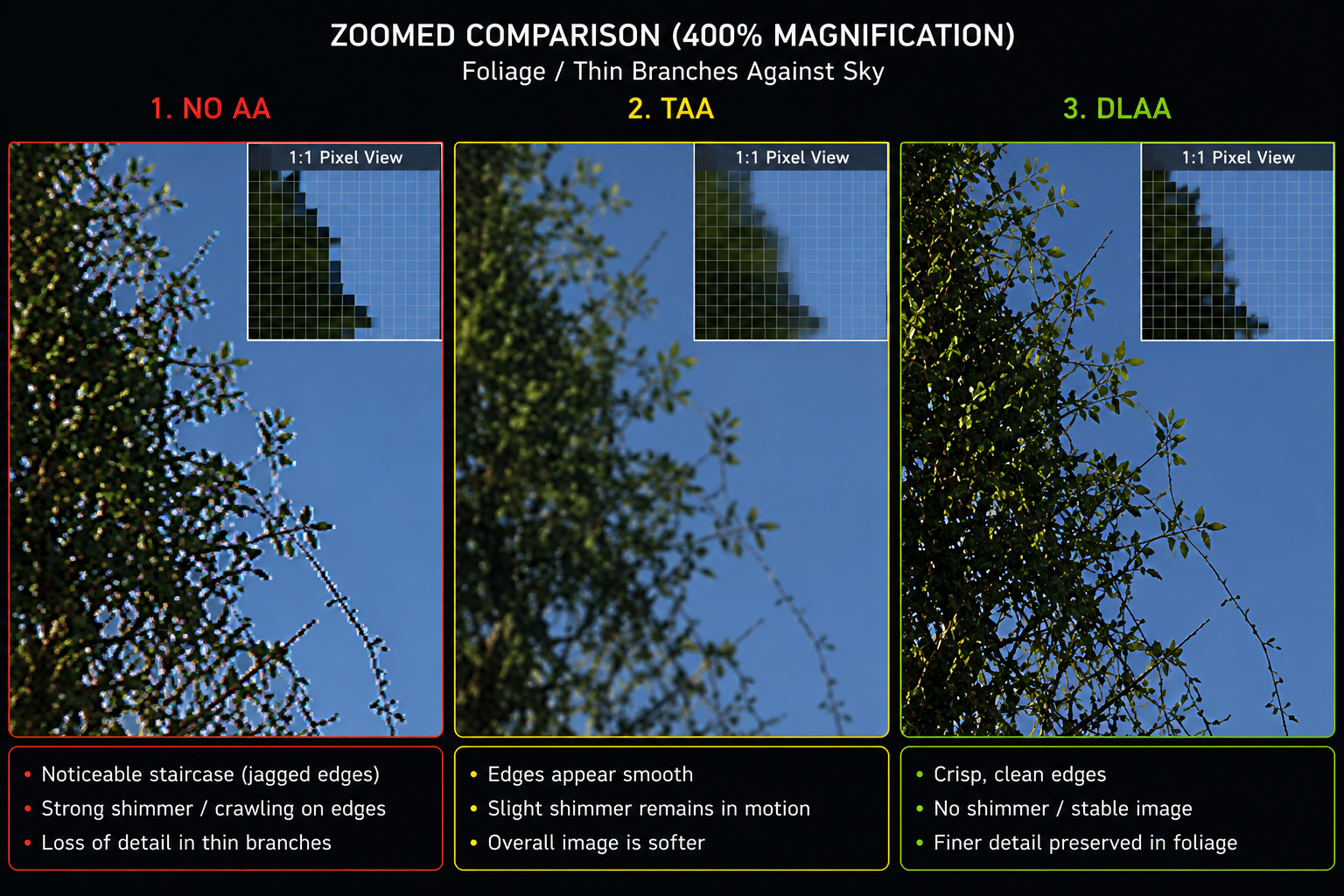

If you have GPU performance to spare and you do not need upscaling, DLAA gives you anti-aliasing that is better than TAA, better than MSAA (which is impractical with deferred shading anyway), and better than any post-process AA. It is, in 2026, the highest-quality anti-aliasing available in real-time graphics, full stop.

The mechanism is the same as in DLSS (temporal accumulation with sub-pixel jitter), except that:

- The network receives a native-resolution color input that already has 1 sample per output pixel.

- Across 8–16 jittered frames, the network has effectively 8–16 samples per pixel to work with.

- The output is, in expectation, close to what a 16×SSAA pass would produce, but at the cost of one network forward pass per frame rather than 16× the rendering work.
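The accumulation argument above can be sketched numerically. The snippet below is a toy model, not how DLSS is implemented: it assumes a Halton (2, 3) jitter sequence (a common choice in temporal AA, but an assumption here) and estimates how much of one pixel a vertical edge covers, first from the pixel centre alone and then from 16 jittered frames. All function names are hypothetical.

```python
def halton(index: int, base: int) -> float:
    """Radical-inverse (Halton) value in [0, 1) for a 1-based index."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result


def jitter_offsets(count: int) -> list:
    """Sub-pixel offsets in [-0.5, 0.5), one per frame (assumed Halton 2/3)."""
    return [(halton(i, 2) - 0.5, halton(i, 3) - 0.5) for i in range(1, count + 1)]


def edge_coverage(offsets, edge_x: float) -> float:
    """Fraction of samples that land left of a vertical edge at edge_x.

    Coordinates are pixel-local: the pixel spans [-0.5, 0.5) on each axis.
    """
    inside = sum(1 for (dx, _dy) in offsets if dx < edge_x)
    return inside / len(offsets)


# An edge at x = -0.2 truly covers 30% of the pixel.
true_coverage = 0.3
one_sample = edge_coverage([(0.0, 0.0)], edge_x=-0.2)          # centre sample only
accumulated = edge_coverage(jitter_offsets(16), edge_x=-0.2)   # 16 jittered frames

print(f"1 sample: {one_sample:.3f}, 16 jittered frames: {accumulated:.3f}")
```

A single centre sample can only answer "covered" or "not covered"; the jittered sequence recovers a fractional coverage close to the true value, which is the sense in which accumulation approximates supersampling. The toy model ignores motion and history rejection, which is where the network earns its keep.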

Why it beats TAA at the same resolution

A well-tuned TAA implementation is already pretty good. DLAA is better because:

- The clamp logic is learned, not hand-coded. TAA's color clamping is a YCoCg neighborhood AABB, which is a coarse heuristic. DLAA's history validation is a function the network learned from millions of examples; it can keep more history when history is correct, and reject more when it isn't.

- Thin features survive longer. TAA tends to drop sub-pixel features in motion. DLAA's network has learned what thin features look like and accumulates them more aggressively.

- Less softness. TAA's blend factor plus reprojection blur tends to soften the image; engines compensate with a post-sharpen. DLAA's reconstruction kernel is sharper to start with, so output sharpness is closer to native.

- Specular shimmer is suppressed. A common TAA failure is "shimmering specular highlights" on moving wet surfaces. DLAA is much better at this, because the network has seen specular shimmer in training and learned to filter it temporally.
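For reference, the hand-coded heuristic that DLAA replaces looks roughly like the sketch below: convert the current frame's 3×3 neighborhood to YCoCg, build an axis-aligned bounding box, clamp the reprojected history color into it, then blend. This is a generic TAA resolve written for illustration, not code from any particular engine; the function names and the `alpha` default are assumptions.

```python
def rgb_to_ycocg(rgb):
    """Convert an (r, g, b) tuple to (Y, Co, Cg)."""
    r, g, b = rgb
    return (0.25 * r + 0.5 * g + 0.25 * b,
            0.5 * r - 0.5 * b,
            -0.25 * r + 0.5 * g - 0.25 * b)


def ycocg_to_rgb(ycocg):
    """Inverse of rgb_to_ycocg."""
    y, co, cg = ycocg
    return (y + co - cg, y + cg, y - co - cg)


def clamp_history(history_rgb, neighborhood_rgb):
    """Clamp the history color to the YCoCg AABB of the current neighborhood."""
    samples = [rgb_to_ycocg(c) for c in neighborhood_rgb]
    lo = tuple(min(s[i] for s in samples) for i in range(3))
    hi = tuple(max(s[i] for s in samples) for i in range(3))
    h = rgb_to_ycocg(history_rgb)
    clamped = tuple(min(max(h[i], lo[i]), hi[i]) for i in range(3))
    return ycocg_to_rgb(clamped)


def taa_resolve(current_rgb, history_rgb, neighborhood_rgb, alpha=0.1):
    """Blend the current sample with the clamped history (alpha is assumed)."""
    clamped = clamp_history(history_rgb, neighborhood_rgb)
    return tuple(alpha * c + (1.0 - alpha) * h for c, h in zip(current_rgb, clamped))


# Stale red history over a now-grey neighborhood is pulled all the way to grey.
grey = (0.5, 0.5, 0.5)
rejected = clamp_history((1.0, 0.0, 0.0), [grey] * 9)

# History that already lies inside the neighborhood's box passes through untouched.
kept = clamp_history(grey, [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)])
```

The box has no notion of whether the history was actually right: a thin bright feature that is absent from this frame's neighborhood gets clamped away just like genuinely stale data, which is the coarseness the list above refers to.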

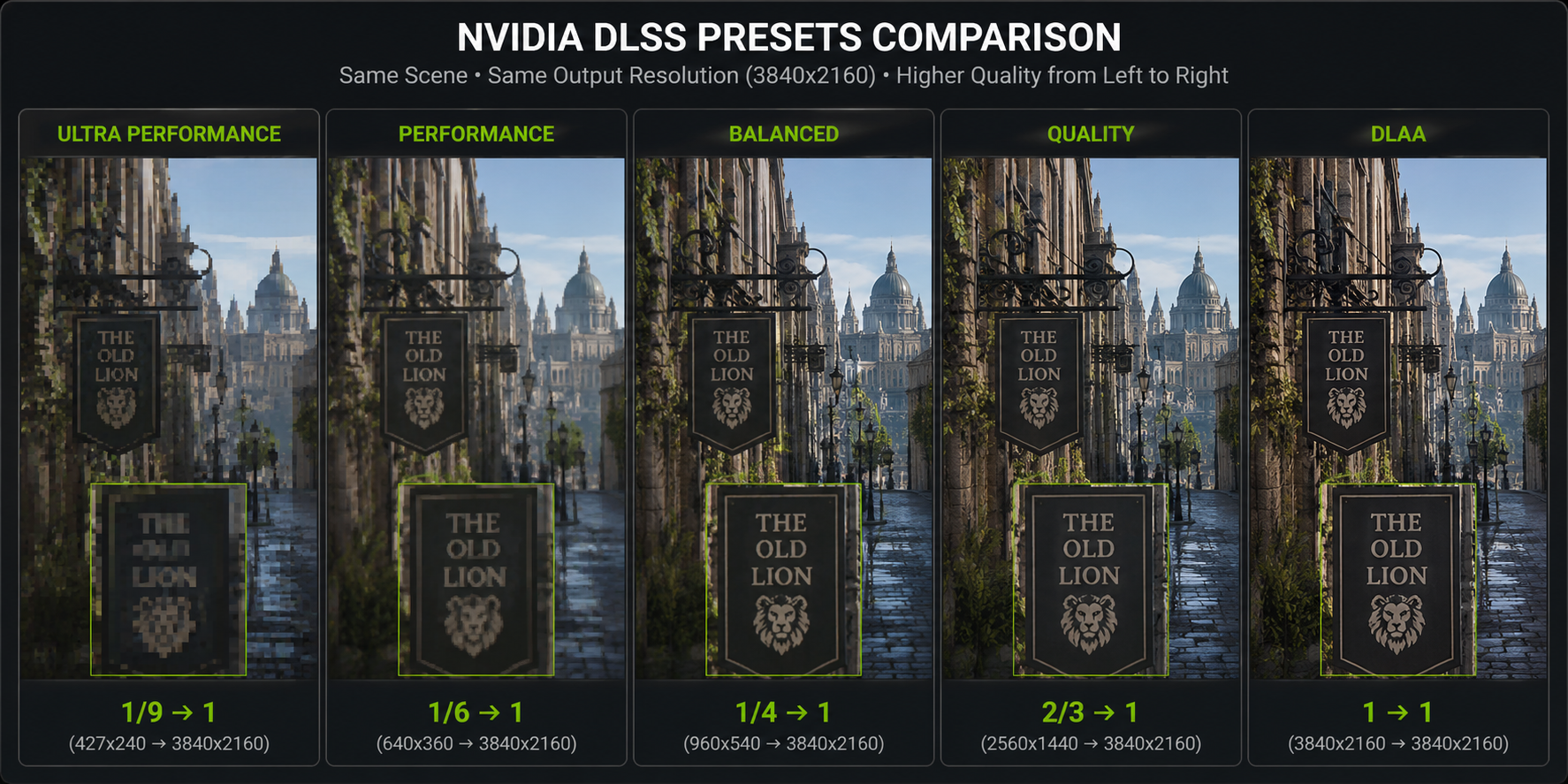

The trade-off is the cost: DLAA's network pass runs with a full-resolution input, so it is more expensive than DLSS at the same output resolution. On a 4090 rendering 4K, DLAA's overhead is around 2–4 ms, compared to ~1 ms for DLSS at the same output resolution (because DLSS's internal-resolution work is cheaper). And, unlike DLSS, DLAA does not win any of that time back by rendering fewer pixels.

When you should use DLAA

A rule of thumb that works:

- Performance is fine, image quality is not → use DLAA.

- Performance is the problem → use DLSS at the highest quality preset you can afford.

If a game offers a DLAA option (and a growing number do: Cyberpunk 2077, Alan Wake 2, Star Wars Outlaws, etc.), and your framerate is already comfortably above your refresh rate, DLAA is almost always the right call. It uses your GPU's spare capacity to produce a sharper, cleaner image rather than wasting it on extra frames you don't need.
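The rule of thumb can be written down as a small frame-budget check. This is only a sketch: `choose_mode` is a hypothetical helper, the 3 ms default is simply the midpoint of the 2–4 ms range quoted earlier for a 4090 at 4K, and it ignores the cost of whatever AA pass DLAA would replace.

```python
def choose_mode(native_frame_ms: float, refresh_hz: float,
                dlaa_overhead_ms: float = 3.0) -> str:
    """Pick DLAA if native rendering plus the DLAA pass still fits the refresh budget."""
    budget_ms = 1000.0 / refresh_hz
    if native_frame_ms + dlaa_overhead_ms <= budget_ms:
        return "DLAA"
    return "DLSS"


# 120 Hz display: the per-frame budget is about 8.33 ms.
print(choose_mode(native_frame_ms=4.0, refresh_hz=120))  # headroom to spare
print(choose_mode(native_frame_ms=7.0, refresh_hz=120))  # no headroom
```

Measure the overhead on your own GPU and resolution before relying on the default; the quoted range is specific to one card and one output size.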

A historical note: DLSS "Quality at output" mode

Before DLAA was officially exposed, the same effect was achievable by forcing DLSS's internal resolution equal to the output resolution. Some power users did this via hacked config files in DLSS-supporting games. NVIDIA eventually shipped it as a first-class option under the DLAA brand name, which debuted in The Elder Scrolls Online in 2021. So DLAA is, formally, just a DLSS preset, but it has earned a separate name because the use case is different.

Why no one ships DLAA-only

You almost never see a game ship with DLAA but not DLSS. The reason is engineering economics: if your engine is correctly integrated with DLSS (jitter, motion vectors, history management, exposure, all wired up), then exposing DLAA is a one-line option: just set the scale factor to 1.0. So every DLSS-supporting game can, in principle, support DLAA, and increasingly they do.
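To make the "one-line option" concrete, here is a minimal sketch of a mode table in which DLAA is just one more entry. The per-axis scale factors are the commonly cited values for the standard DLSS presets; the `SCALE` table and `render_resolution` helper are hypothetical, and a real integration would ask the SDK for the render resolution rather than computing it by hand.

```python
# Per-axis render scale for each mode (commonly cited values; treat as assumptions).
SCALE = {
    "DLAA": 1.0,
    "Quality": 2.0 / 3.0,
    "Balanced": 0.58,
    "Performance": 0.5,
    "Ultra Performance": 1.0 / 3.0,
}


def render_resolution(output_w: int, output_h: int, mode: str):
    """Internal render resolution for a given output size and mode."""
    scale = SCALE[mode]
    return (round(output_w * scale), round(output_h * scale))


for mode in SCALE:
    w, h = render_resolution(3840, 2160, mode)
    print(f"{mode:>17}: {w}x{h}")
```

Everything downstream of this function (jitter, motion vectors, history) is unchanged between modes, which is why adding the DLAA entry costs an engine so little.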

A side note: DLAA, anti-aliasing, and resolution scaling are the same problem

A final conceptual point that ties everything together: at the mathematical level, anti-aliasing and resolution scaling are the same operation. Both integrate the radiance function over a pixel footprint. In one case the pixel is small (high resolution); in the other the pixel is large (low resolution). The number of samples per pixel, and where they fall, determines image quality.
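In symbols (the notation is introduced here for illustration and is not from any DLSS documentation): let $L(x)$ be the radiance arriving at image-plane position $x$, $A_p$ the footprint of output pixel $p$, and $h$ a reconstruction filter centred on the pixel. The ideal pixel value and its sampled estimate from $N$ sample positions $x_i$ are

$$
I(p) = \int_{A_p} h(x - p)\, L(x)\, dx \;\approx\; \frac{\sum_{i=1}^{N} h(x_i - p)\, L(x_i)}{\sum_{i=1}^{N} h(x_i - p)}
$$

Anti-aliasing raises $N$ for a fixed footprint $A_p$; upscaling enlarges $A_p$ relative to the spacing of the rendered samples. Either way the estimator is the same, and only the sample density and placement change.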

DLAA, DLSS Quality, DLSS Performance, DLSS Ultra Performance, and even (in principle) a hypothetical "DLSS Super-Sampling" mode where input is higher than output, are all the same algorithm with different sampling densities. The network was trained to handle a wide range and it generalises across them. This is the unifying insight of modern reconstruction.
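A quick way to see the "different sampling densities" point is to count rendered samples per output pixel. The sketch below is illustrative arithmetic only: the scale factors are the commonly cited preset values, the 1.5× supersampling entry is the hypothetical mode mentioned above, and the accumulated figure assumes an ideal static scene where no history is rejected.

```python
def samples_per_output_pixel(scale: float, frames: int = 1) -> float:
    """Rendered samples per output pixel: per-axis scale squared, times frames accumulated."""
    return scale * scale * frames


MODES = {
    "Ultra Performance": 1.0 / 3.0,
    "Performance": 0.5,
    "Quality": 2.0 / 3.0,
    "DLAA": 1.0,
    "Supersampling (hypothetical)": 1.5,
}

for name, scale in MODES.items():
    per_frame = samples_per_output_pixel(scale)
    over_16 = samples_per_output_pixel(scale, frames=16)
    print(f"{name:>28}: {per_frame:.2f} per frame, {over_16:.1f} over 16 frames")
```

The modes differ only in where they sit on this one axis: Performance gets a quarter of a sample per output pixel per frame, DLAA gets one, and a supersampling mode would get more than one.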

Now we move to the radically different problem: not reconstructing pixels, but reconstructing entire frames that the renderer never drew. That is frame generation, and it is next.