Why Frame Generation Exists

Monitors got faster than GPUs. Frame generation is the industry's answer.

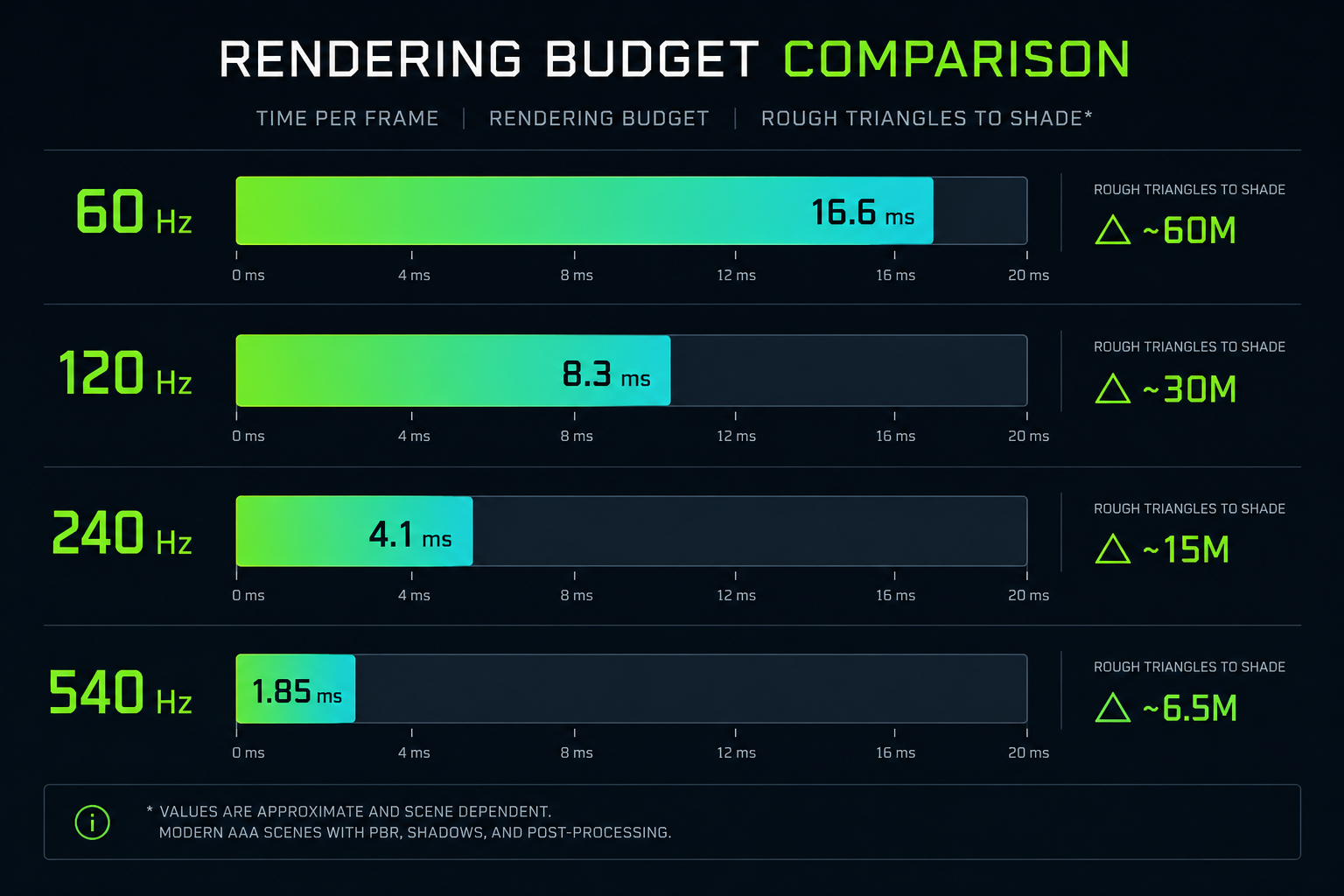

In 2018 a high-end gaming monitor refreshed at 144 Hz. By 2024, 240 Hz panels were mainstream and 360–540 Hz monitors were on the shelf. To feed the fastest of those panels honestly, at 540 Hz, a GPU has to produce a complete, physically simulated, fully shaded frame every 1.85 milliseconds.
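The 1.85 ms figure is simply the reciprocal of the refresh rate. As a quick sanity check, here is a minimal sketch that converts the refresh rates mentioned above into per-frame time budgets; the function name `frame_budget_ms` is ours, chosen for illustration.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available to produce one frame, in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    return 1000.0 / refresh_hz


# Refresh rates taken from the paragraph above.
for hz in (144, 240, 360, 540):
    print(f"{hz} Hz -> {frame_budget_ms(hz):.2f} ms per frame")
# 144 Hz -> 6.94 ms per frame
# 240 Hz -> 4.17 ms per frame
# 360 Hz -> 2.78 ms per frame
# 540 Hz -> 1.85 ms per frame
```

Going from 144 Hz to 540 Hz shrinks the budget by a factor of 3.75, which is the gap the rest of this course is about.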

That is not happening. Not at 4K, not with path-traced lighting, not on hardware you can actually buy.

So the industry did something different. It stopped trying to render every frame from scratch and started reconstructing them: first by upscaling from a lower resolution, then by inventing entirely new frames in between the real ones. That second trick is what we call frame generation, and it is the most disruptive change in real-time rendering since programmable shaders.

What this course is, and is not

This is a deep dive, not a settings guide. We are not going to tell you whether to use "Quality" or "Performance" mode in Cyberpunk 2077. We are going to tell you, in detail:

- What a GPU actually does between the moment you press a key and the moment a photon leaves your monitor.

- Why traditional anti-aliasing is fundamentally broken and how temporal accumulation quietly replaced it.

- How DLSS Super Resolution uses a neural network to reconstruct a 4K image from a 1080p render.

- Why DLAA is, mathematically, the same network running on a different input.

- How DLSS 3 Frame Generation synthesises a frame that was never rendered, using optical flow and motion vectors.

- How DLSS 4 Multi-Frame Generation pushes that idea to as many as 3 generated frames per real one.

- What Sony's PSSR on the PlayStation 5 Pro does differently and why a console approach has to make different trade-offs.

- The role of AMD FSR 3 / FSR 4 and Intel XeSS.

- Why frame generation adds latency even when it raises FPS, and how Reflex and similar tricks claw it back.

- Where ghosting, smearing, and disocclusion artifacts come from at the algorithmic level.

By the end you will be able to read a developer's GDC talk on temporal upscaling, look at a screenshot of a game and tell roughly what reconstruction technique was used, and explain to a friend, with actual math behind it, why a "240 fps" game is sometimes really a 60 fps game in disguise.
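The "240 fps is really 60 fps" arithmetic can be sketched in a few lines. This is an idealised model, not a measurement: it assumes every rendered frame is followed by the same number of generated frames and that generating them costs no render time. The function names are ours.

```python
def displayed_fps(rendered_fps: float, generated_per_rendered: int) -> float:
    """Frames shown per second when each rendered frame is followed by
    `generated_per_rendered` synthesised frames (idealised, zero overhead)."""
    return rendered_fps * (1 + generated_per_rendered)


def rendered_fps(displayed: float, generated_per_rendered: int) -> float:
    """Invert the relationship: how many frames per second were actually rendered."""
    return displayed / (1 + generated_per_rendered)


# Three generated frames per real one, as in multi-frame generation.
print(displayed_fps(60, 3))   # 240
print(rendered_fps(240, 3))   # 60.0
```

Under this model the game simulation and input sampling still advance at the rendered rate, which is why the on-screen counter alone does not tell you how responsive a game will feel.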

A note on what's "AI" here

Marketing uses the words AI, deep learning, and neural network interchangeably. In this course we will be specific. When NVIDIA says DLSS uses AI, what is actually running is a convolutional neural network (sometimes a transformer) that was trained offline on millions of pairs of (low-resolution input, high-resolution ground truth) images. At runtime it is just a pile of matrix multiplications on the Tensor Cores of your GPU. There is no reasoning, no "understanding" of the scene; there is a learned function mapping pixels to pixels, and it happens to be very good at it.
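To make "a learned function mapping pixels to pixels" concrete, here is a toy sketch of the basic building block of a convolutional network: one 3x3 filter slid across a small grayscale image. It is not DLSS and bears no relation to NVIDIA's actual network; the weights below are a hand-picked sharpening kernel used purely for illustration, whereas a real network learns its weights during training and stacks many such layers.

```python
def conv3x3(image, kernel):
    """Apply one 3x3 filter to a 2D image (list of rows), without padding.

    Each output pixel is a dot product between a 3x3 neighbourhood of the
    input and the 9 filter weights: multiplications and additions only.
    """
    height, width = len(image), len(image[0])
    weights = [kernel[dy][dx] for dy in range(3) for dx in range(3)]
    output = []
    for y in range(height - 2):
        row = []
        for x in range(width - 2):
            patch = [image[y + dy][x + dx] for dy in range(3) for dx in range(3)]
            row.append(sum(p * w for p, w in zip(patch, weights)))
        output.append(row)
    return output


# Hypothetical, hand-picked weights (a classic sharpening kernel).
SHARPEN = [[0, -1, 0],
           [-1, 5, -1],
           [0, -1, 0]]

# A 4x4 image with a bright 2x2 square in the middle.
image = [[0, 0, 0, 0],
         [0, 9, 9, 0],
         [0, 9, 9, 0],
         [0, 0, 0, 0]]

print(conv3x3(image, SHARPEN))  # [[27, 27], [27, 27]]
```

Flatten every 3x3 patch into a row and the whole operation becomes a single matrix product, which is the kind of workload matrix-multiply hardware is built to accelerate.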

We will look at that function and what makes it work.

Prerequisites

You should be comfortable with:

- The idea that a GPU is a massively parallel processor.

- Basic linear algebra: vectors, matrices, dot products.

- What a pixel and a triangle are.

Everything else (the rendering pipeline, motion vectors, temporal jitter, optical flow, tensor operations) we will build from scratch.

Next, we'll do a fast crash course in computer graphics, just enough that the rest of the course stands on something solid.